Most AI tools promise answers.

MiroFish promises something more seductive: a way to rehearse what could happen before the crowd reacts, before the headlines settle and before confidence becomes obvious to everyone else.

That is exactly why MiroFish has exploded across social media. Examples keep surfacing of people who say they have used it to gain a serious advantage. One of the most talked-about cases is user @0p0jogggg, who reportedly cashed out more than 1.4 million.

That idea is powerful on its own. But what makes MiroFish even more compelling is that it is not really about one magical prompt or one flashy interface. The real power sits in the quality of the input. The better the data you feed it, the more useful the output becomes.

That is the real differentiator.

And for sports users, that opens up a very interesting question: do you build MiroFish yourself for free and shape it entirely around your own needs, or do you choose speed and plug into a setup that already has the right data streams, structure and know-how in place?

What is MiroFish?

MiroFish is an open-source swarm intelligence engine. It was built in China by Guo Hangjiang, a computer science student also known online as BaiFu. After MiroFish went viral on GitHub and across social media, the project reportedly secured a 4 million investment from the Shanda Group.

On its official GitHub repository, it is presented as a system that takes seed material from the real world, such as breaking news, policy drafts or financial signals, then builds a parallel digital world where large numbers of agents interact, evolve and generate a report you can explore. The project describes this as a way to "rehearse the future in a digital sandbox."

In simpler terms, MiroFish is interesting because it is not built around a single flat answer. It is built around scenarios. Instead of treating the world like one clean line of logic, it tries to simulate how different actors, narratives and reactions may influence what happens next.

That is why it stands out. It feels less like a standard AI tool and more like a system for pressure-testing possibilities.

The real advantage is not just MiroFish. It is the data you feed it.

This is the part many people underestimate.

MiroFish can be impressive on its own, but the real differentiator is the quality and freshness of the input. Better input creates better simulations, better context and better outputs.

That input can come from several directions. The X API (formerly Twitter) gives programmatic access to public conversation, posts, users and trends, making it a useful source for real-time sentiment and public reaction. SportsDataIO provides structured sports data through its APIs, including real-time data products, documentation and coverage across major leagues.

That combination is where things start to get powerful.

One stream tells you what people are saying.

The other tells you what is actually happening.

And when those streams are fed into a system built to simulate trajectories rather than just summarize events, the output becomes much more interesting.
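
As a rough illustration of combining the two streams, here is a minimal sketch that merges already-fetched posts and structured facts into one plain-text seed document. The `Post` and `GameFact` shapes, the `build_seed` helper and the sample values are all hypothetical. They are not taken from the X API, SportsDataIO or MiroFish, and the seed format MiroFish actually expects may differ.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    """One public post, already fetched from a social source (hypothetical shape)."""
    author: str
    text: str


@dataclass
class GameFact:
    """One structured fact from a sports data feed (hypothetical shape)."""
    label: str
    value: str


def build_seed(topic: str, posts: List[Post], facts: List[GameFact]) -> str:
    """Merge both streams into one plain-text seed document."""
    lines = [f"Topic: {topic}", "", "What is actually happening:"]
    lines += [f"- {fact.label}: {fact.value}" for fact in facts]
    lines += ["", "What people are saying:"]
    lines += [f"- @{post.author}: {post.text}" for post in posts]
    return "\n".join(lines)


# Made-up sample data, for illustration only.
seed = build_seed(
    "Example matchup",
    posts=[Post("fan_one", "Star guard looked hurt in warmups.")],
    facts=[GameFact("Injury report", "Star guard listed as questionable")],
)
print(seed)
```

Keeping the factual stream and the sentiment stream in separate, labeled sections makes it easier to see later which part of the input drove a given scenario.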

Self-deployed versus third-party

At a glance, this looks like a technical choice.

In reality, it is a practical one.

If you self-deploy MiroFish, you get control. You can shape the workflow, connect your own data sources and build around your own use case. The official quick start shows that self-hosting involves setting up the repository, environment variables, model configuration, dependencies and either a source deployment or Docker setup.

That route makes sense for builders.

Self-deployed:

- + Full control over workflow

- + Custom data source connections

- + Free (open source)

- - Technical setup required

- - Ongoing maintenance

Third-party:

- + Instant access, no setup

- + Pre-built data pipelines

- + Optimized for specific use cases

- - Less customization

- - Subscription cost

But most people searching for MiroFish are not necessarily looking to configure servers or spend their afternoon troubleshooting environment variables. Many simply want to experience what the system can do and see how it could fit into their workflow.

That is where a third-party or hosted layer becomes appealing. It removes the setup friction and gets you to the interesting part faster.

How to deploy MiroFish yourself

If you do want to run MiroFish yourself, the official GitHub repository makes the path fairly clear.

The listed prerequisites include Node.js 18+, Python 3.11 to 3.12, uv, LLM API configuration and a Zep API key. From there, the repo walks through environment setup, dependency installation and starting both the frontend and backend locally. There is also a Docker deployment route.

1. Clone the MiroFish repository

2. Copy the example .env file and add your API keys

3. Install the Node and Python dependencies

4. Start the frontend and backend locally

5. Open the local interface and begin testing your own workflows
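
Before working through the steps above, it can help to confirm that the listed prerequisites are in place. The following is a minimal preflight sketch, not part of the MiroFish repository. The helper names are hypothetical, and it only checks the Python version, the Node.js version and whether `uv` is on the PATH. It does not check API keys, because the exact variable names live in the repository's example .env file.

```python
import shutil
import subprocess
import sys
from typing import Dict, Optional


def python_ok(version=sys.version_info) -> bool:
    """True when the interpreter is Python 3.11 or 3.12."""
    return (3, 11) <= tuple(version[:2]) <= (3, 12)


def node_major(version_text: str) -> int:
    """Parse the major version from `node --version` output such as 'v18.19.0'."""
    return int(version_text.strip().lstrip("v").split(".")[0])


def tool_version(name: str) -> Optional[str]:
    """Return `<name> --version` output, or None when the tool is not on PATH."""
    path = shutil.which(name)
    if path is None:
        return None
    result = subprocess.run([path, "--version"], capture_output=True, text=True)
    return result.stdout.strip()


def preflight() -> Dict[str, bool]:
    """Run every check and report pass/fail per prerequisite."""
    node = tool_version("node")
    return {
        "python 3.11-3.12": python_ok(),
        "node 18+": bool(node) and node_major(node) >= 18,
        "uv installed": shutil.which("uv") is not None,
    }


if __name__ == "__main__":
    for check, passed in preflight().items():
        print(f"{'ok     ' if passed else 'MISSING'}  {check}")
```

Running the script prints one line per prerequisite, so a missing tool shows up before any time is spent on the clone and install steps.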

So yes, it is absolutely possible. The GitHub documentation is structured well enough that someone with basic technical skills can usually get MiroFish running locally within roughly one to two hours.

But the more important question is whether that is the best use of your time.

If your goal is to build a custom system, self-deployment makes sense. It gives you control, flexibility and the freedom to shape the setup around your exact needs. But if your goal is to get useful outputs quickly, the self-hosted route is often not the most attractive one. Open-source gives you freedom, but freedom comes with friction.

Using MiroFish through third parties

For many users, the appeal of third-party access is simple: speed.

Running MiroFish yourself gives you freedom, but it also asks for setup time, technical attention and ongoing maintenance. That can be worth it for builders. But for most people, the real goal is not to configure a system. It is to get useful output quickly and start exploring what this kind of intelligence can actually do.

That is where third-party tools become interesting. They remove friction, shorten the path to value and usually come with a clearer use case from day one. The key is not just choosing a platform that uses MiroFish. It is choosing the one that fits the kind of outcomes you care about.

For example, mirofish.homes makes sense for users who want to explore broader real-world news scenarios and test how narratives may develop. SafeBet is the more natural fit for sports users who want a workflow built specifically around forecasting, structured signals and fast-moving information.

A sports case study: when better data creates better decisions

Sports is one of the clearest examples of where a MiroFish-style workflow becomes genuinely useful.

Not because there is a lack of information, but because there is usually too much of it. Public sentiment moves quickly. New updates land late. Narratives spread faster than facts. Raw data on its own is not enough. What matters is having the right information, organizing it correctly and cross-checking it before it turns into insight.

That is where a specialized sports workflow starts to stand apart.

Instead of relying on one single input, a strong setup can pull together multiple layers of information: historical performance, current form, matchup context, market behavior, injury updates, public sentiment and live developments close to game time. On top of that, the strongest signals are not taken at face value. They are cross-checked across multiple inputs, helping filter out noise and reducing the chance of overreacting to one isolated piece of information.
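
To make the cross-checking idea concrete, here is a minimal sketch of one possible rule: a call is confirmed only when enough independent signals favor the same side and there is no tie. The `cross_check` function, the signal names and the threshold of three are assumptions chosen for illustration. They do not describe how SafeBet or MiroFish actually filter signals.

```python
from collections import Counter
from typing import Dict, Optional


def cross_check(signals: Dict[str, str], min_agree: int = 3) -> Optional[str]:
    """Return the side backed by at least `min_agree` signals, else None.

    `signals` maps a signal name (for example "current_form") to the side
    that signal favors. A tie for first place is treated as unconfirmed.
    """
    if not signals:
        return None
    counts = Counter(signals.values()).most_common(2)
    pick, votes = counts[0]
    tied = len(counts) > 1 and counts[1][1] == votes
    return pick if votes >= min_agree and not tied else None


# Three signals agree, one dissents: the call is confirmed.
confirmed = cross_check({
    "historical_performance": "home",
    "current_form": "home",
    "injury_updates": "home",
    "public_sentiment": "away",
})

# One isolated signal is not enough: the call is filtered out.
isolated = cross_check({"public_sentiment": "away"})

print(confirmed, isolated)
```

The point of a rule like this is the second case: a single loud input, such as a spike in public sentiment, never produces a call on its own.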

That is the real value. Not just more data, but better data discipline.

In that sense, SafeBet is a strong example of what third-party MiroFish integration can look like when it is tailored for a specific niche. Rather than asking the user to build the workflow from scratch, it packages the process into something far easier to use consistently: a sports-focused system where data is continuously evaluated, signals are cross-checked and insights are delivered in a format designed for day-to-day use.

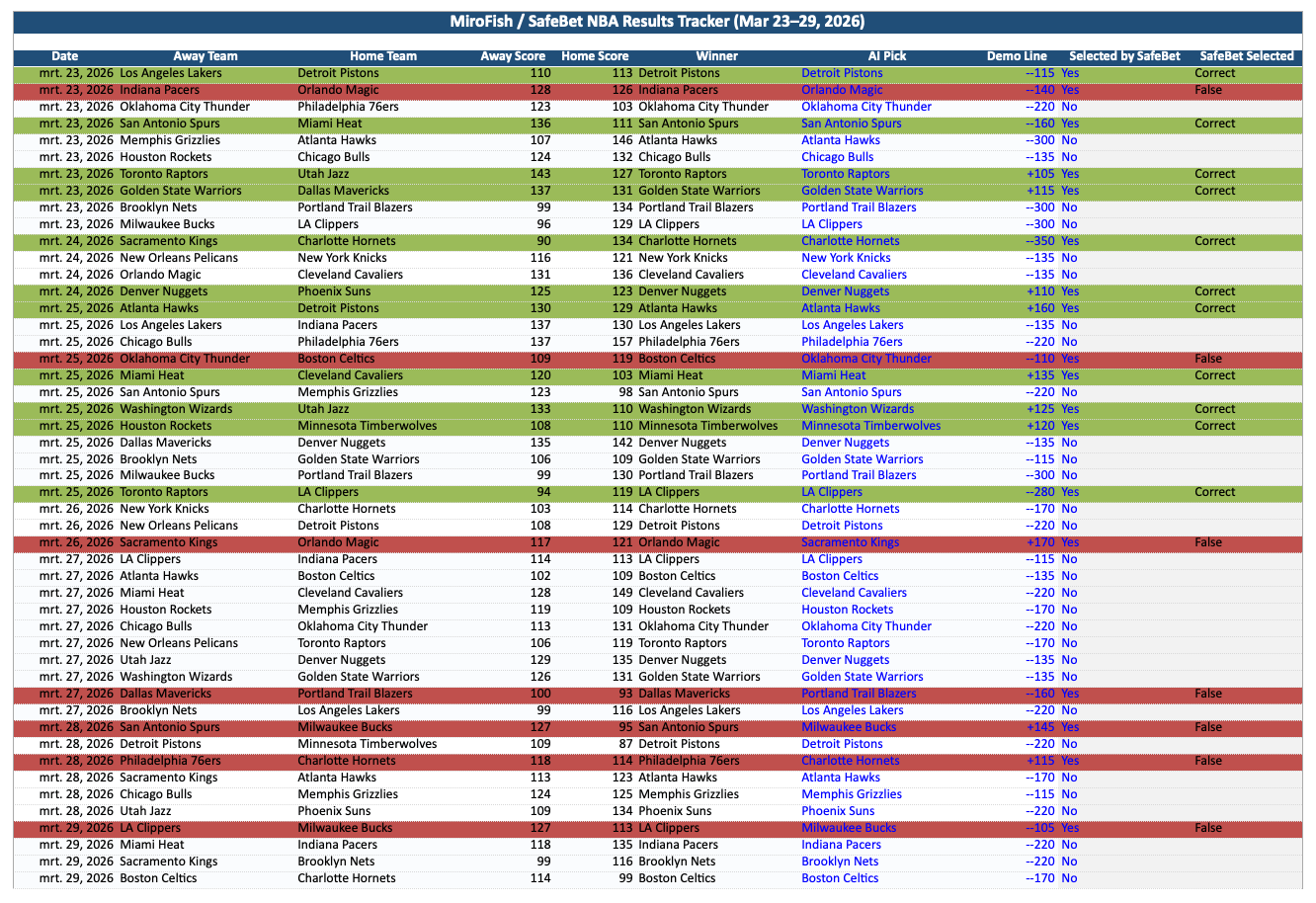

7-day test results

Numbers on their own can be dry. What made this 7-day test interesting was not just the volume, but what happened after the filtering.

Over the course of seven days, 49 NBA games were analyzed. That alone is not impressive. Anyone can throw a large number of games into a model and generate output. The real difference came from the cross-checking. Out of those 49 games, only 18 were confirmed by SafeBet's own AI after being layered against its internal signals. In other words, the system did not just push out everything it saw. It became selective.

And that selectivity mattered.

Of those 18 cross-checked calls, 11 were correct, an accuracy rate of about 61%. In most industries, that would already be enough to get attention. But what makes this result far more interesting is where those wins came from. Seven of the 11 correct calls were underdogs with a line of +100 or higher.
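
The arithmetic behind these figures can be checked directly. The short sketch below recomputes the shares from the counts reported in this test and converts a +100 line into its break-even win probability using the standard American-odds conversion. The function names are illustrative only.

```python
def hit_rate(correct: int, total: int) -> float:
    """Share of `total` that was `correct`, as a fraction between 0 and 1."""
    return correct / total


def implied_probability(american_odds: int) -> float:
    """Break-even win probability implied by an American moneyline."""
    if american_odds > 0:
        return 100 / (american_odds + 100)
    return -american_odds / (-american_odds + 100)


print(f"confirmed share:    {hit_rate(18, 49):.1%}")  # 18 of 49 games confirmed
print(f"accuracy:           {hit_rate(11, 18):.1%}")  # 11 of 18 calls correct
print(f"underdog share:     {hit_rate(7, 11):.1%}")   # 7 of 11 wins were underdogs
print(f"break-even at +100: {implied_probability(100):.0%}")
```

The output is 36.7% confirmed, 61.1% accuracy, 63.6% of wins on underdogs, and a 50% break-even at +100. A line above +100 has a break-even below 50%, which is why correct underdog calls carry more weight than correct calls on favorites.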

That is where the story changes.

It is one thing to be right on obvious favorites. It is another thing entirely to identify value where the market is giving less respect, less confidence and less room for error. Hitting on underdogs at that rate is what makes the result stand out. It suggests that the edge is not just coming from volume or randomness, but from the quality of the filtering and the discipline of the cross-check.

That is also why SafeBet deserves to be taken seriously. The real value is not in flooding the user with endless forecasts. It is in narrowing the field, pressure-testing the signals and surfacing the spots that still look strong after multiple layers of confirmation. That is a very different proposition from simply pushing raw output. It is a more selective approach, and in this 7-day sample, that selectivity is exactly what made the results worth paying attention to.

So which route makes the most sense?

That depends on what you want.

If you want to build, customize and experiment with the tech in depth, the MiroFish GitHub repository is the right place to start. It gives you the engine and the flexibility to shape it around your own stack.

If you want to test the concept quickly, a hosted experience is usually the better entry point. It lowers the friction and helps you understand the value faster.

And if your interest is specifically sports, then the most practical route is often not starting from scratch at all. It is using a workflow that already turns this style of intelligence into something structured, repeatable and easier to act on.

Conclusion

MiroFish is exciting because it points to a different way of working with uncertainty.

Not just more dashboards.

Not just more summaries.

A more dynamic way to explore how situations may evolve.

But the tool alone is only part of the story. The real differentiator is the data you feed into it. Public conversation from sources like the X API and structured sports data from providers like SportsDataIO can dramatically shape the quality of what comes out.

So for most visitors, the path is fairly simple.

If you want to explore the engine, look at the MiroFish GitHub project.

If you want to understand what this can look like in a real sports workflow, look at SafeBet.

Because the real value is not just seeing what MiroFish is.

It is seeing what it becomes when the right data and the right use case come together.